PTS 2024: Lisbon

Almost exactly a year since the last Perl Toolchain Summit, it was time for the next one, this time in Lisbon. Last year, I wrote:

In 2019, I wasn’t sure whether I would go. This time, I was sure that I would. It had been too long since I saw everyone, and there were some useful discussions to be had. I think that overall the summit was a success, and I’m happy with the outcomes. We left with a few loose threads, but I’m feeling hopeful that they can, mostly, get tied up.

Months later, I did not feel hopeful. They were left dangling, and I felt like some of the best work I did was not getting any value. I was grouchy about it, and figured I was done. Then, though, I started thinking that there was one last project I’d like doing for PAUSE: Upgrading the server. It’s the thing I said I wanted to do last year, but barely even started. This year, I said that if we could get buy-in to do it, I’d go. Since I’m writing this blog post, you know I went, and I’m going to tell you about it.

PAUSE Bootstrap

Last year, Matthew and I wanted to make it possible to quickly spin up a working PAUSE environment, so we could replace the long-suffering “pause2” server. We were excited by the idea of starting from work that Kenichi Ishigaki had done to create a Docker container running a test instance. We only ended up doing a little work on that, partly because we thought we’d be starting from scratch and didn’t know enough Docker to be useful.

This year, we decided it’d be our whole mission. We also said that we were not going to start with Docker. Docker made sense, it was probably a great way to do it, but Matthew and I still aren’t Docker users. We wanted results, and we felt the way to get them was to stick to what we know: automated installation and configuration of an actual VM. We pitched this plan to Robert Spier, one of the operators of the Perl NOC, and he was on board. I leaned on him pretty hard to actually come to Lisbon and help, and he agreed. (He also said that a sufficiently straightforward installer would be a good starting point for turning things into Docker containers later, which was reassuring.)

At Fastmail, where Matthew and I work, we can take every other Friday for experimental or out-of-band work, and we decided we’d get started early. If the installer was done by the time we arrived, we’d be in a great position to actually ship. This was a great choice. Matthew and I, with help from another Fastmail colleague, Marcus, wrote a program. It started off life as unpause, but is now in the repo as bootstrap/mkpause. You can read the PAUSE Bootstrap README if you want to skip to “how do I use this?”.

The idea is that there’s a program to run on a fresh Debian 12 box. That installs all the needed apt packages, configures services, sets up Let’s Encrypt, creates unix users, builds a new perl, installs systemd services, and gets everything running. There’s another program that can create that fresh Debian 12 box for you, using the DigitalOcean API. (PAUSE doesn’t run in DigitalOcean, but Fastmail has an account that made it easy to use for development.)
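To give a feel for the shape of an installer like that, here is a minimal sketch in Python. It is not the real mkpause, and every name in it is made up for illustration. It assumes each piece of setup can be written as a named step that raises an exception if it fails, so the run stops at the first problem instead of leaving a half-configured box.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str                   # human-readable label, printed as the step starts
    action: Callable[[], None]  # does the work; raises an exception on failure


def run_steps(steps: List[Step]) -> List[str]:
    """Run each step in order, stopping at the first failure.

    Returns the names of the steps that completed.
    """
    completed: List[str] = []
    for step in steps:
        print(f"==> {step.name}")
        step.action()  # any exception propagates and aborts the whole run
        completed.append(step.name)
    return completed


# Hypothetical placeholders: a real installer would shell out to apt,
# write config files, and so on. These only record that they ran.
log: List[str] = []
steps = [
    Step("install apt packages", lambda: log.append("apt")),
    Step("create unix users", lambda: log.append("users")),
    Step("build perl", lambda: log.append("perl")),
    Step("install systemd services", lambda: log.append("systemd")),
]

done = run_steps(steps)
```

Keeping the steps in one ordered list makes it cheap to re-run the whole thing against a fresh VM, which is the kind of fast iteration that made this approach pay off.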

I think Matthew and I worked well together on this. We found different rabbit holes interesting. He fixed hard problems I was (barely) content to suffer with. (There was some interesting nonsense with the state of apt locking and journald behavior shortly after VM “cloud init”.) I slogged through testing exactly whether each cron job ran correctly and got a pre-built perl environment ready for quick download, to avoid running plenv and cpanm during install.

Before we even arrived, we could go from zero to a fully running private PAUSE server in about two and a half minutes! Quick builds meant we could iterate much faster. We also had a script to import all of PAUSE’s data from the live PAUSE. It took about ten minutes to run, but we had it down to one minute by day two.

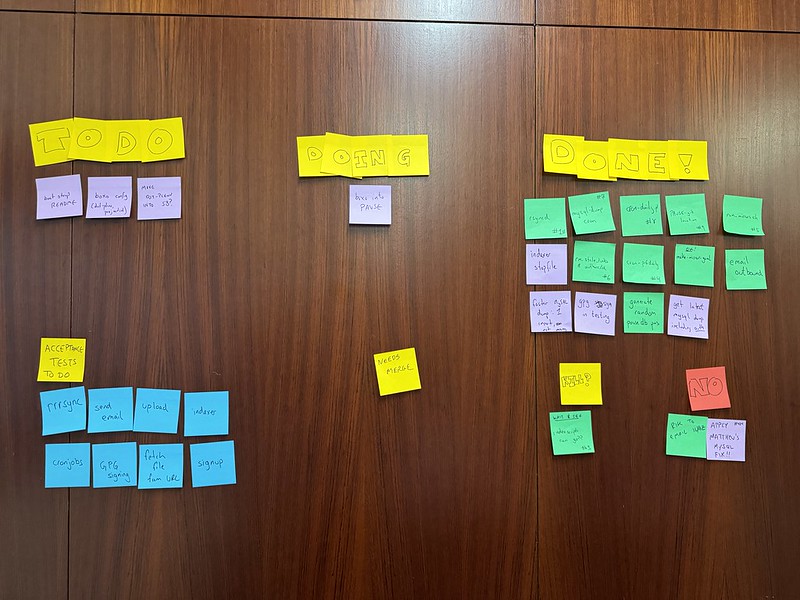

When we arrived, I took my todo and threw it up on the wall in the form of a sticky note kanban board.

We spent day one re-testing cron jobs, improving import speed, and (especially) asking Andreas König all kinds of questions about things we’d skipped out of confusion. More on those below, but without Andreas, we could easily have broken or ignored critical bits of the system.

By the end of day two, we were confident that we could deploy the next day. I’d hoped we could deploy on day two, but there were just too many bits that were not quite ready. Robert had spent a bunch of time running the installer on the VM where he intended to run the new production PAUSE service, known at the event as “pause3”. There were networking things to tweak, and especially storage volume management. This required the rejiggering of a bunch of paths, exposing fun bugs or incorrect assumptions.

The first thing we did on day three was start reviewing our list of pre-deploy acceptance tests. Did everything on the list work? We thought so. We took down pause2 for maintenance at 10:00, resynchronized everything, watched a lot of logs, and did some uploads. We got some other attendees to upload things to pause3. Everything looked good, so we cut pause.perl.org over to pause3. It worked! We were done! Sort of.

We had some more snags to work through, but it was just the usual nonsense. A service was logging to the wrong place. The new MySQL was stricter about data validation than the old one. An accidental button-push took down networking on the VM. Everything got worked out in the end. I’ll include some “weird stuff that happened” below, but the short version is: it went really smoothly, for this kind of work.

On day four, we got to work on fit and finish. We cleaned up logging noise, we applied some small merge requests that we’d piled up while trying to ship. We improved the installer to move more configuration into files, instead of being inlined in the installer. Also, we prepared pull requests to delete about 20,000 lines of totally unused files. This is huge. When trying to learn how an older codebase works, it can be really useful to just grep the code for likely variable names or known subroutines. When tens of thousands of lines in the code base are unused, part of the job becomes separating live code out from dead code, instead of solving a problem.

We also overhauled a lot of documentation. It was exciting to replace the long and bit-rotted “how to install a private PAUSE” with something that basically said “run this program”. It doesn’t just say that, though, and now it’s accurate and written from the last successful execution of the process. You can read how to install PAUSE yourself.

Matthew, Robert, and I celebrated a successful PTS by heading off to Belém Tower to see the sights and eat pastéis.

I should make clear, finally, that the PAUSE team was five people. Andreas König and Kenichi Ishigaki were largely working on other goals not listed here. It was great to have them there for help on our work, but they got other things done, not documented in this post!

Here’s our kanban board from the end of day four:

Specifics of Note

run_mirrors.sh

This was one of the two mirroring-related scripts we had to look into. It was bananas. Turns out that PAUSE had a list of users who ran their own FTP servers. It would, four times a day, connect to those servers and retrieve files from them directly into the users’ home directories on PAUSE.

Beyond the general bananas-ness of this, the underlying non-PAUSE program in /usr/bin/mirror no longer runs, as it uses $*, eliminated back in v5.30. Rather than fix it and keep something barely used and super weird around, we eliminated this feature. (I say “barely used”, but I found no evidence it was used at all.)

make-mirror-yaml.pl

The other mirror program! This one updated the YAML file that exposes the CPAN mirror list. Years ago, the mirror list was eliminated, and a single name now points to a CDN. Still, we were diligently updating the mirror list every hour. No longer.

rrrsync

You can rsync from CPAN, but it’s even better to use rrr. With rrr, the PAUSE server is meant to maintain a few lists of “files that changed in a given time window”. Other machines can then synchronize only files that have changed since they last checked, with occasional full-scan reindexes.

We got this working pretty quickly, but it seemed to break at the last minute. What had happened? We couldn’t tell, everything looked great, and there were no errors. Eventually, I found myself using strace against perl. It turned out that during our reorganization of the filesystem, we’d moved where the locks live. We put in a symlink for the old name, and that’s what rrr was using… but it didn’t follow symlinks when locking. Once we updated the configuration to use the canonical name and not the link, everything worked.

Matthew said, “You solved a problem with strace!” I said, “I know!” Then we high fived and got back to work.

I was never happy with the symlinks we introduced during the filesystem reorganization, but I was happy when I eliminated the last one during day four cleanup!
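For anyone who hasn’t been bitten by this class of bug, here is a small Python sketch of the general problem. It is not rrr’s locking code, and the directory and file names are invented. It only shows that a configured path that goes through a symlink and the canonical path are different names for the same place, so any code that works from the name as written, without resolving it, can disagree with code that uses the real location.

```python
import os
import tempfile

with tempfile.TemporaryDirectory() as tmp:
    # Resolve the temp dir itself first, since on some systems it is a symlink.
    tmp = os.path.realpath(tmp)

    real_dir = os.path.join(tmp, "new-locks")  # hypothetical: where locks live now
    link_dir = os.path.join(tmp, "old-locks")  # hypothetical: old name, kept as a symlink
    os.mkdir(real_dir)
    os.symlink(real_dir, link_dir)

    # What the configuration says, versus where that actually points.
    configured = os.path.join(link_dir, "recent.lock")
    canonical = os.path.realpath(configured)

    names_differ = configured != canonical
    same_place = canonical == os.path.join(real_dir, "recent.lock")
    link_is_symlink = os.path.islink(link_dir)

    print("configured:", configured)
    print("canonical: ", canonical)
```

Pointing the configuration at the canonical name, as we did, sidesteps the question of which tools resolve links and which don’t.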

the root partition filled up

We did all this work to keep the data partition capable of growth, and then / filled up. Ugh.

It turned out it was logs. This wasn’t too much of a surprise, but it was annoying. It was especially annoying because we decided early on that we’d just accept using journald for all our logging, and that should’ve kept us from going over quota.

It turned out that on the VM, something had installed a service I’d never heard of. Its job was to notice when something wanted to use the named syslog socket, and then start rsyslogd. Once that happened, we were double-logging a ton of stuff, and there was no log rotation configured. We killed it off.

We did other tuning to make sure we’d keep enough logs without running out of space, but this was the interesting part.
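When a partition fills up like this, the first job is finding out which directory is eating the space. Here is a minimal Python sketch of that kind of check. It is not something from the PAUSE repo, and the file names in the demo are invented. It totals the bytes of regular files under each immediate child of a directory, which is roughly what you’d want to know about a log directory.

```python
import os
import tempfile
from typing import Dict


def dir_sizes(root: str) -> Dict[str, int]:
    """Return total bytes of regular files under each immediate child of root."""
    sizes: Dict[str, int] = {}
    for entry in os.scandir(root):
        if entry.is_dir(follow_symlinks=False):
            total = 0
            for dirpath, _dirnames, filenames in os.walk(entry.path):
                for name in filenames:
                    path = os.path.join(dirpath, name)
                    if not os.path.islink(path):  # skip symlinks, count real files only
                        total += os.path.getsize(path)
            sizes[entry.name] = total
        elif entry.is_file(follow_symlinks=False):
            sizes[entry.name] = entry.stat(follow_symlinks=False).st_size
    return sizes


# Demo on a throwaway directory with made-up contents.
with tempfile.TemporaryDirectory() as tmp:
    os.mkdir(os.path.join(tmp, "journal"))
    with open(os.path.join(tmp, "journal", "system.journal"), "wb") as fh:
        fh.write(b"x" * 300)
    with open(os.path.join(tmp, "syslog"), "wb") as fh:
        fh.write(b"x" * 100)

    sizes = dir_sizes(tmp)
    largest = max(sizes, key=sizes.get)
    print(sizes, "largest:", largest)
```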

Future Plans

We have some. If nothing else, I’m dying to see my pull request 405 merged. (It’s the thing I wrote last year.) I have a bunch of half-done work that will be easier to finish after that. But the problem was: would this wait another year?

We finished our day — just before heading off to Belém — by talking about development between now and then. I said, “Look, I feel really demotivated and uninterested if I can’t continuously ship and review real improvements.” Andreas said, “I don’t want to see things change out from under me without understanding what happened.”

The five of us agreed to create a private PAUSE operations mailing list where we’d announce (or propose) changes and problems. We all joined, along with Neil Bowers, who is an important part of the PAUSE team but couldn’t attend Lisbon. With that, we felt good about keeping improvements flowing through the year. Robert has been shipping fixes to log noise. I’ve got a significant improvement to email handling in the wings. It’s looking like an exciting year ahead for PAUSE! (That said, it’s still PAUSE. Don’t expect miracles, okay?)

Thanks to our sponsors and organizers

The Perl Toolchain Summit is one of the most important events in the year for Perl. A lot of key projects have folks get together to get things done. Some of them are working all year, and use this time for deep dives or big lifts. Others (like PAUSE) are often pretty quiet throughout the year, and use this time to do everything they need to do for the year.

Those of us doing stuff need a place to work, and we need a way to get there and sleep, and we’re also pretty keen on having a nice meal or two together. Our sponsors and organizers make that possible. Our sponsors provide much-needed money to the organizers, and the organizers turn that money into concrete things like “meeting rooms” and “plane tickets”.

I offer my sincere thanks to our organizers: Laurent Boivin, Philippe Bruhat, and Breno de Oliveira, and also to our sponsors. This year, the organizers have divided sponsors into those who handed over cash and those who provided in-kind donations, like people’s time or paying attendees’ airfare and hotel bills directly. All these organizations and people are helping to keep Perl’s toolchain operational and improving. Here’s the breakdown:

Monetary sponsors: Booking.com, The Perl and Raku Foundation, Deriv, cPanel, Inc., Japan Perl Association, Perl-Services, Simplelists Ltd, Ctrl O Ltd, Findus Internet-OPAC, Harald Joerg, Steven Schubiger.

In-kind sponsors: Fastmail, Grant Street Group, Deft, Procura, Healex GmbH, SUSE, Zoopla.

Breno especially should get called out for organizing this from five thousand miles away. You never could’ve guessed, and it ran exceptionally smoothly. Also, it meant I got to see Lisbon, which was a terrific city that I probably would not have visited any time soon otherwise. Thanks, Breno!